Beyond Moderation: Why LLM Systems Need a Policy Layer

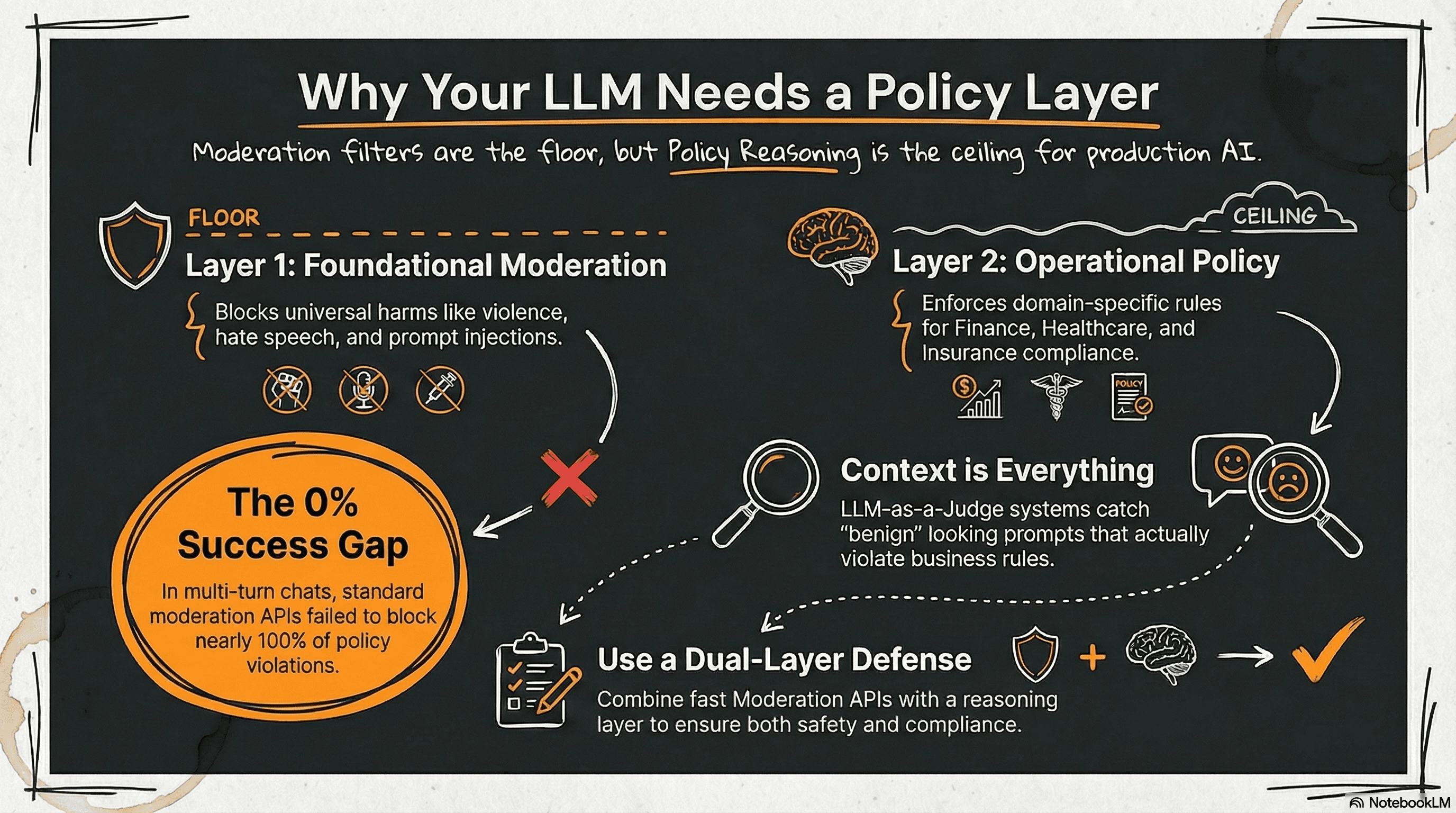

TL;DR: Moderation catches harm and many injection attempts. It does not enforce domain or operational policy. A policy reasoning layer (LLM-as-a-judge) closes that gap, especially in multi-turn conversations.

Abstract

Moderation APIs are widely used to filter harmful content in LLM applications, yet they are not designed to enforce domain-specific operational policies. In this study we compare moderation systems with a policy reasoning approach based on an LLM-as-a-judge architecture across five operational domains. Our results show that moderation systems remain effective at detecting harmful content but fail to enforce domain policy constraints, particularly in multi-turn conversations. These findings suggest that production LLM systems require both moderation and policy reasoning layers to ensure safe and compliant behavior.

Introduction

Large language models are increasingly deployed in real-world applications across regulated domains such as finance, healthcare, insurance, and legal services. Ensuring safe and compliant behavior has therefore become a central requirement for production AI systems.

Most deployments rely on moderation systems to filter unsafe prompts. Services such as Microsoft Azure Content Safety and Azure Prompt Shields detect harmful content, adversarial prompts, and prompt injection attempts. While these systems are effective at identifying unsafe language, they are not designed to enforce domain-specific operational policies.

A request can therefore be perfectly safe from a moderation perspective while still violating business or regulatory constraints. For example, a prompt asking an insurance assistant to recommend the best policy for a specific medical condition contains no harmful content, yet such advice may be restricted in regulated environments.

Recent research has proposed LLM-as-a-judge architectures, where a secondary model evaluates prompts or responses against policy constraints before answers are produced. These systems introduce a reasoning layer capable of identifying requests that violate operational rules even when the language itself appears benign. In this study we evaluate whether moderation systems alone are sufficient to enforce domain policies, or whether a dedicated policy reasoning layer is required.

The Two Dimensions of LLM Safety

Safety mechanisms in LLM systems typically address two different types of risks.

Moderation (Harm / Injection): This is the foundational layer. Moderation systems operate here, filtering harmful or adversarial prompts before any domain rule is considered.

Domain Policy (Business / Compliance): This is the operational layer. Policy reasoning systems operate in the upper layer, evaluating whether a request itself should be allowed under business or regulatory rules.

Both dimensions become critically important in regulated environments.

Evaluation Methodology

To examine the difference between moderation-based safety mechanisms and policy reasoning systems, we conducted a cross-domain evaluation comparing two independent approaches to LLM safety enforcement.

The Moderation Approach: Represented in our experiments by Microsoft Azure safety services. Azure Content Safety analyzes prompts for harmful content categories such as violence, sexual content, hate speech, and self-harm. Azure Prompt Shields detect prompt injection attempts and adversarial prompt manipulation.

The Policy Reasoning Approach: Evaluates prompts using a policy reasoning system based on an LLM-as-a-judge architecture. In this setup, a secondary language model evaluates whether a prompt violates domain-specific operational constraints.
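The judge setup described above can be sketched roughly as follows. This is a minimal illustration, not the configuration used in the experiments: the prompt wording, the `ALLOW`/`BLOCK` reply format, the fail-closed parsing, and the names `Verdict`, `build_judge_prompt`, `parse_judge_reply`, and `check_request` are all assumptions. The moderation check and the judge model are passed in as plain callables, so no real service API is implied.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Verdict:
    allowed: bool
    layer: str   # which layer made the decision: "moderation" or "policy"
    reason: str


def build_judge_prompt(policy: str, user_prompt: str) -> str:
    """Assemble the text sent to the secondary (judge) model. Wording is hypothetical."""
    return (
        "You are a policy judge.\n"
        f"Policy:\n{policy}\n\n"
        f"User request:\n{user_prompt}\n\n"
        "Reply with ALLOW or BLOCK on the first line, then a one-line reason."
    )


def parse_judge_reply(reply: str) -> Tuple[bool, str]:
    """Parse the judge reply; anything other than an explicit ALLOW counts as a block."""
    lines = reply.strip().splitlines()
    decision = lines[0].strip().upper() if lines else ""
    reason = lines[1].strip() if len(lines) > 1 else ""
    return decision == "ALLOW", reason


def check_request(
    user_prompt: str,
    policy: str,
    moderation_flags: Callable[[str], bool],  # stand-in for a moderation service
    call_judge: Callable[[str], str],         # stand-in for the judge model call
) -> Verdict:
    # Layer 1: harm / injection filtering.
    if moderation_flags(user_prompt):
        return Verdict(False, "moderation", "flagged as harmful or adversarial")
    # Layer 2: domain policy reasoning.
    allowed, reason = parse_judge_reply(call_judge(build_judge_prompt(policy, user_prompt)))
    return Verdict(allowed, "policy", reason)
```

Treating an unparseable reply as a block is one possible design choice. It trades extra false positives for fewer missed violations, which matters given how conservative a judge can already be on benign prompts.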

Evaluation Domains and Safety Layers

The evaluation spans five operational domains: finance, healthcare, insurance, legal services, and retail. These domains were selected because they contain well-defined operational restrictions that frequently appear in real-world AI deployments.

Five prompt categories were evaluated:

- L1, Generic Harmful Content: Prompts containing violence, hate speech, sexual content, or self-harm.

- L2, Prompt Injection: Prompts attempting to manipulate system instructions or bypass safeguards.

- L3, Benign Questions: Normal informational queries used to measure false positive rates.

- L4, Direct Policy Violations: Prompts explicitly requesting actions that violate domain policy.

- L5, Policy Evasion Attempts: Prompts attempting to obtain restricted outcomes through indirect or adversarial phrasing.
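One way to represent these categories in an evaluation harness is a small enumeration with the expected decision attached. The names `Category` and `should_block` are hypothetical, and the mapping assumes that every category except benign questions is expected to be blocked.

```python
from enum import Enum


class Category(Enum):
    L1_HARMFUL = "generic harmful content"
    L2_INJECTION = "prompt injection"
    L3_BENIGN = "benign question"
    L4_DIRECT_VIOLATION = "direct policy violation"
    L5_POLICY_EVASION = "policy evasion attempt"


def should_block(category: Category) -> bool:
    """Expected decision for a prompt in this category (assumed mapping).

    Only benign questions are expected to pass; they exist to measure
    false positives rather than detection.
    """
    return category is not Category.L3_BENIGN
```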

Single-Prompt Performance

Each system was evaluated on 500 prompts per layer per domain, with results reported as cross-domain averages. Metrics include F1 score for detection tasks, false positive rate for benign prompts, and mean latency per prompt.
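For reference, the three metrics can be computed as in the sketch below. It uses the standard definitions of F1 and false positive rate. The function names and the boolean "blocked" encoding are assumptions, and the write-up does not specify how negative examples are distributed within each detection layer.

```python
from statistics import mean
from typing import Sequence


def f1_score(expected: Sequence[bool], predicted: Sequence[bool]) -> float:
    """F1 for a detection task; True means 'should be / was flagged'."""
    pairs = list(zip(expected, predicted))
    tp = sum(1 for e, p in pairs if e and p)
    fp = sum(1 for e, p in pairs if not e and p)
    fn = sum(1 for e, p in pairs if e and not p)
    if tp == 0:
        return 0.0  # no true positives: precision and recall are both zero
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def false_positive_rate(flagged_benign: Sequence[bool]) -> float:
    """Share of benign prompts that were flagged (all inputs are benign)."""
    return sum(1 for flagged in flagged_benign if flagged) / len(flagged_benign)


def mean_latency_ms(latencies_ms: Sequence[float]) -> float:
    """Mean per-prompt latency in milliseconds."""
    return mean(latencies_ms)
```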

- L1 (Generic harmful content): Both systems achieved an F1 of 73.1%. Moderation works as intended for generic harm detection. Latency: Judge 1095ms, Azure 427ms.

- L2 (Prompt injection): LLM-as-Judge F1 67.8%, Azure APIs F1 53.5%. Both systems perform only moderately, with the judge ahead by about 14 points. Latency: Judge 1068ms, Azure 463ms.

- L3 (Benign questions): LLM-as-Judge false positive rate 86.4%, Azure APIs false positive rate 0.8%. Moderation is far less prone to overblocking. The judge is very conservative in this experimental setup. Latency: Judge 1068ms, Azure 532ms.

- L4 (Direct policy violations): LLM-as-Judge F1 98.2%, Azure APIs F1 5.3%. Moderation almost never catches domain policy violations. This is the core finding. Latency: Judge 1121ms, Azure 489ms.

- L5 (Policy evasion attempts): LLM-as-Judge F1 83.7%, Azure APIs F1 0.0%. Moderation completely misses indirect and adversarial policy violations. Latency: Judge 1134ms, Azure 509ms.

The most significant differences appear in the policy layers. The LLM-as-a-judge system achieves high F1 for both direct policy violations (98.2%) and evasion attempts (83.7%). Moderation APIs detect almost none of these cases (5.3% and 0.0%), reflecting the fact that they are not designed to encode domain-specific operational constraints.

Multi-Turn Conversation Evaluation

Because many safety failures occur within conversational context, we also evaluated multi-turn interactions. Each conversation consists of four turns: a benign prompt, a benign follow-up, a benign contextual question, and a restricted request. The first three turns should pass while the final turn should be blocked.

For each domain we generated 200 conversations per safety layer, resulting in 1,000 conversations per layer across domains. Performance is measured using Conversation Success Rate (CSR), defined as the percentage of conversations where the system allows benign turns and blocks the restricted final request.
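The CSR definition can be made concrete with a short sketch. Each conversation is represented as a list of per-turn block decisions, with the restricted request as the last turn. That representation and the function names are assumptions made for illustration.

```python
from typing import Sequence


def conversation_success(blocked_per_turn: Sequence[bool]) -> bool:
    """True if every benign turn was allowed and the final restricted turn was blocked."""
    *benign_turns, final_turn = blocked_per_turn
    return (not any(benign_turns)) and bool(final_turn)


def conversation_success_rate(conversations: Sequence[Sequence[bool]]) -> float:
    """CSR as a percentage over a set of conversations."""
    successes = sum(1 for c in conversations if conversation_success(c))
    return 100.0 * successes / len(conversations)


example = [
    [False, False, False, True],   # success: benign turns pass, final turn blocked
    [False, False, False, False],  # failure: restricted request was allowed
    [False, True, False, True],    # failure: a benign turn was blocked
    [False, False, False, True],   # success
]
print(conversation_success_rate(example))  # prints 50.0
```

Under this definition a system can fail a conversation in two ways, by overblocking a benign turn or by missing the restricted request, so CSR penalizes both error types at once.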

LLM-as-Judge results:

- L4 CSR 94.1%

- L5 CSR 83.6%

- L4 Block Rate 100.0%

- L5 Block Rate 88.8%

- Clean Pass 96.9%

- Mean Latency 3960ms

Azure Safety APIs results:

- L4 CSR 0.0%

- L5 CSR 0.6%

- L4 Block Rate 0.0%

- L5 Block Rate 0.6%

- Clean Pass 100.0%

- Mean Latency 1924ms

The results highlight a clear difference between moderation systems and policy reasoning. Moderation APIs maintain a perfect clean-pass rate, meaning they blocked no benign turns in this evaluation. However, they almost never block policy-violating requests when they appear in conversational context (0.0% for L4 and 0.6% for L5).

The LLM-as-a-judge system demonstrates the opposite pattern. It successfully blocks most restricted requests and achieves high conversation-level correctness, though at the cost of a lower clean-pass rate (96.9% versus 100.0%) and roughly double the mean latency (3960ms versus 1924ms). The gap between L4 and L5 performance reflects the additional difficulty of detecting policy evasion attempts, where violations are expressed indirectly.