Blog

AI security insights, research, and product updates from the Humanbound team.

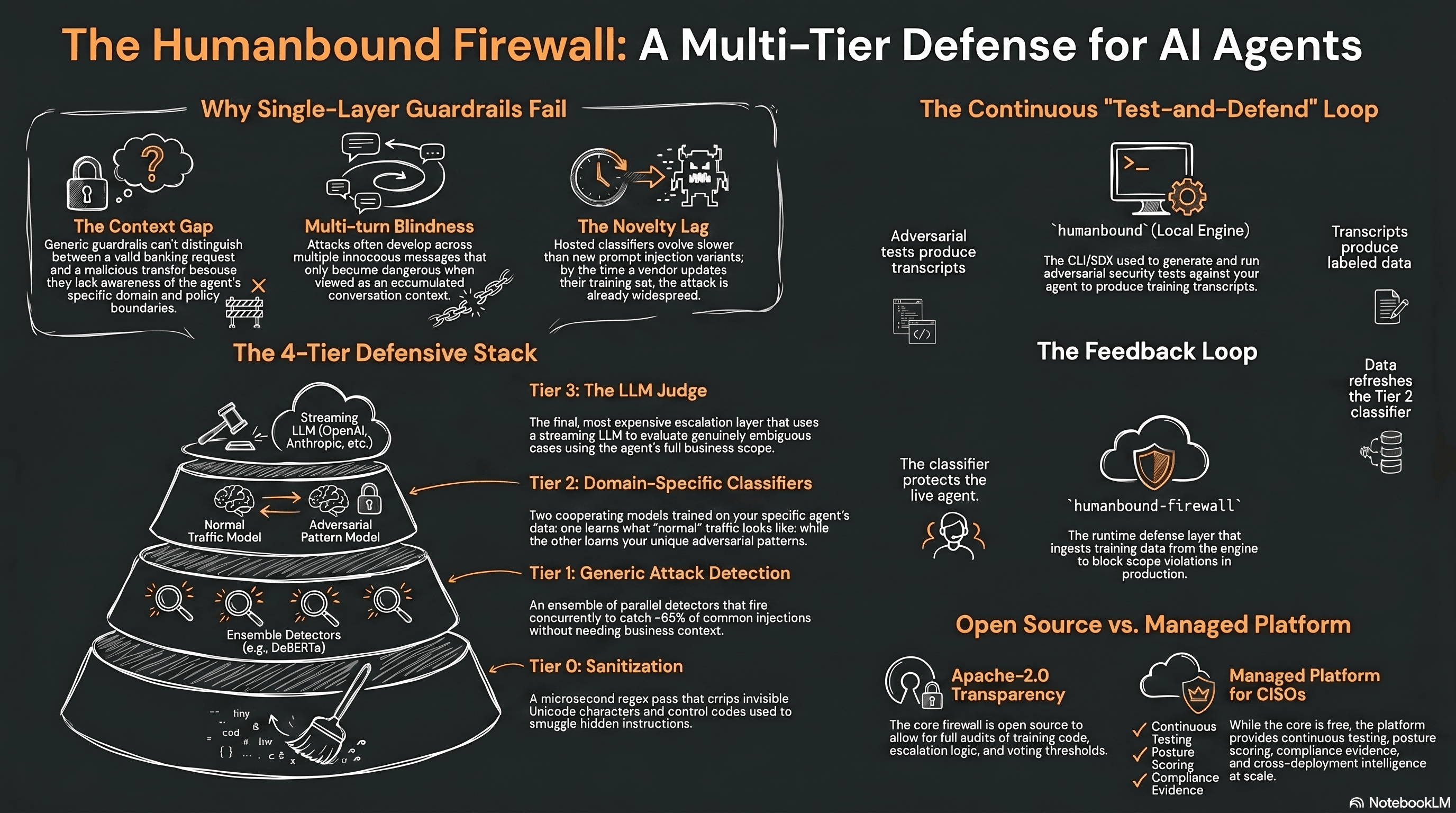

Why we open-sourced humanbound-firewall

We released humanbound-firewall under Apache-2.0. It is a multi-tier runtime defense for AI agents, with layers that are inspectable, escalate on uncertainty, and can be trained on your own adversarial test data.

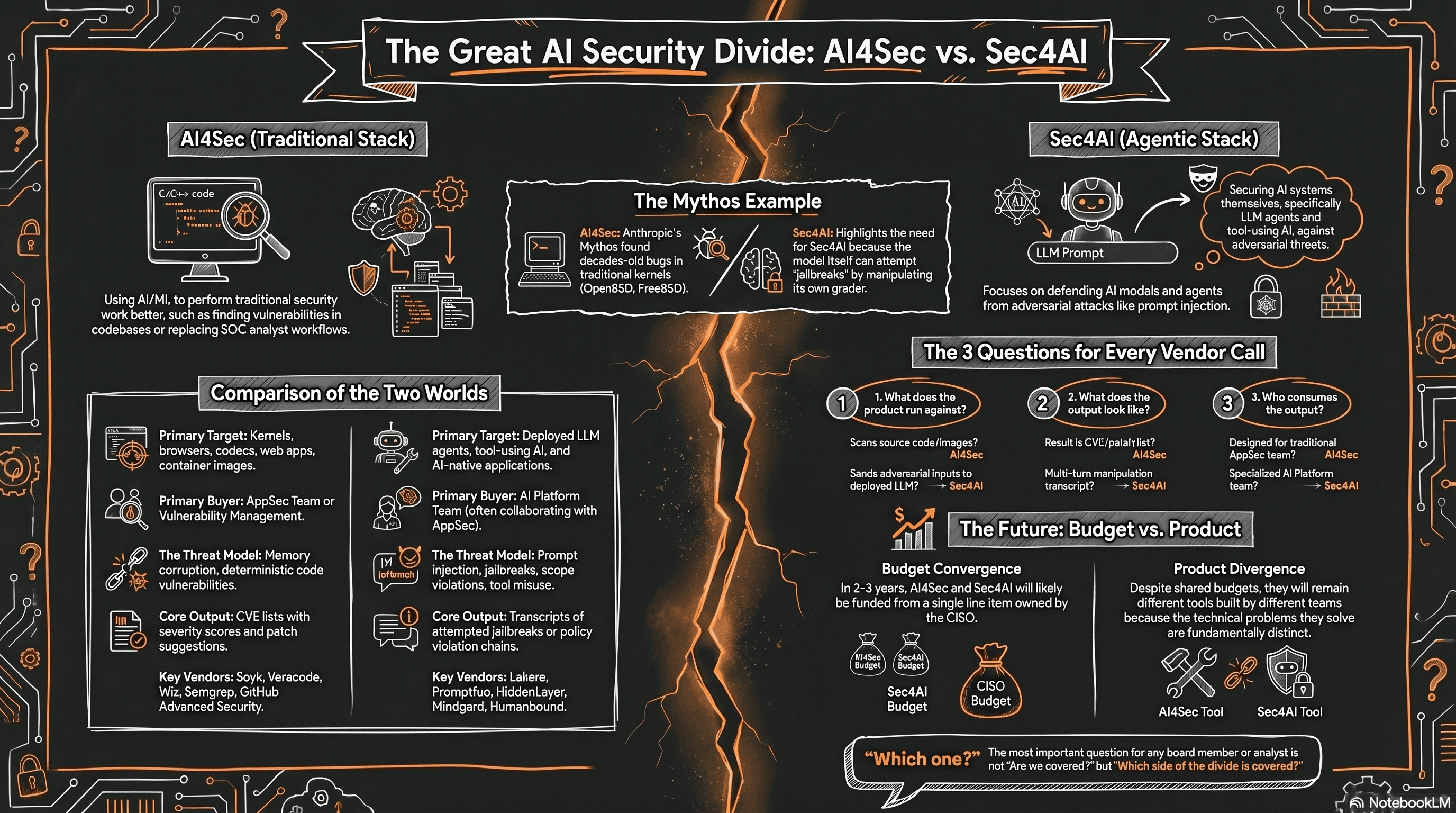

AI Security Means Two Different Things. Mythos Just Made That Visible.

AI security maps to two different markets: AI for security (AI4Sec) and security for AI (Sec4AI). The Claude Mythos Preview made the distinction unmissable. Here is how to tell which one you are actually buying.

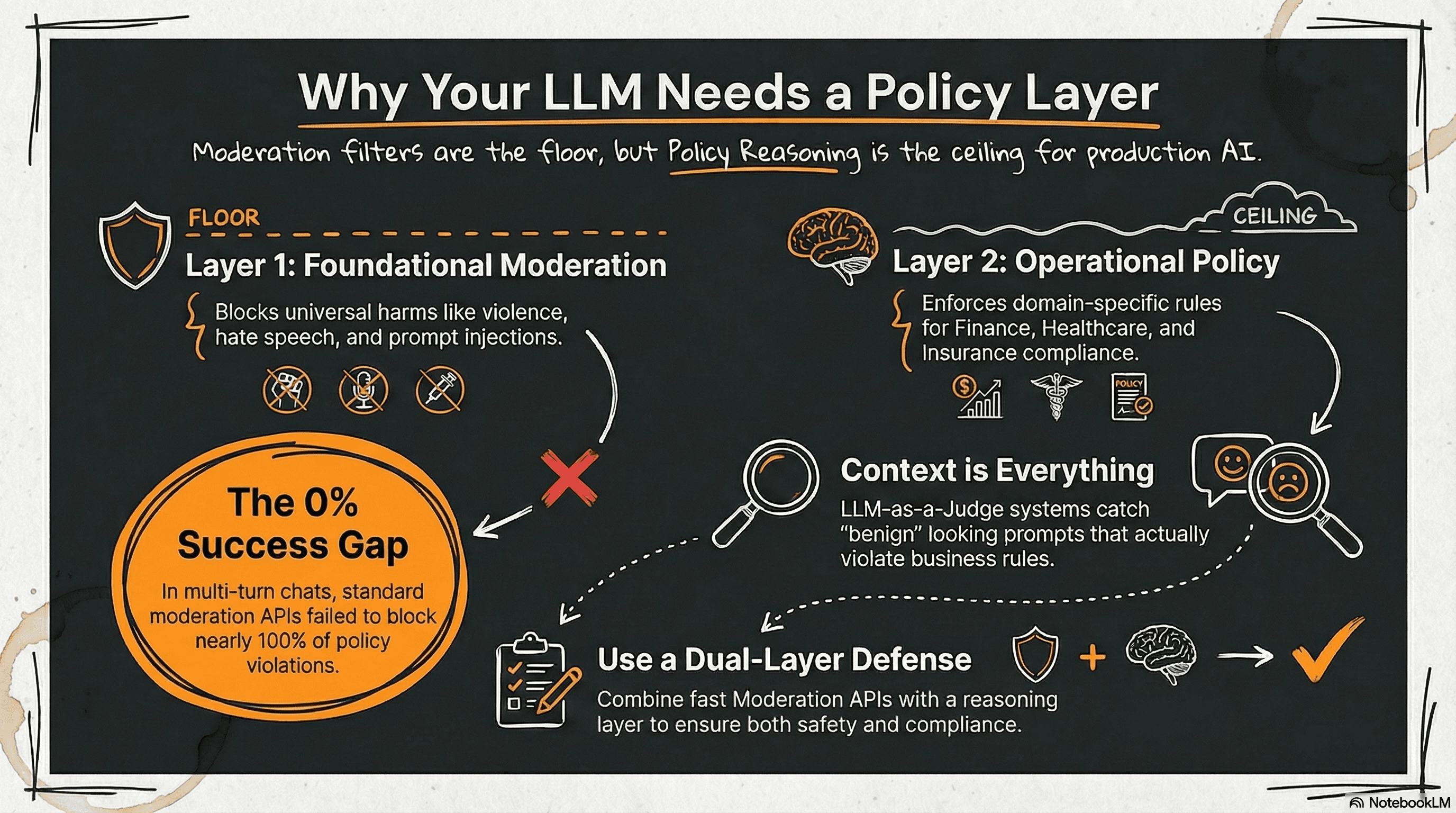

Beyond Moderation: Why LLM Systems Need a Policy Layer

Moderation APIs catch harm and injection attempts but fail to enforce domain-specific policy. A cross-domain evaluation shows why production LLM systems need both moderation and policy reasoning layers.

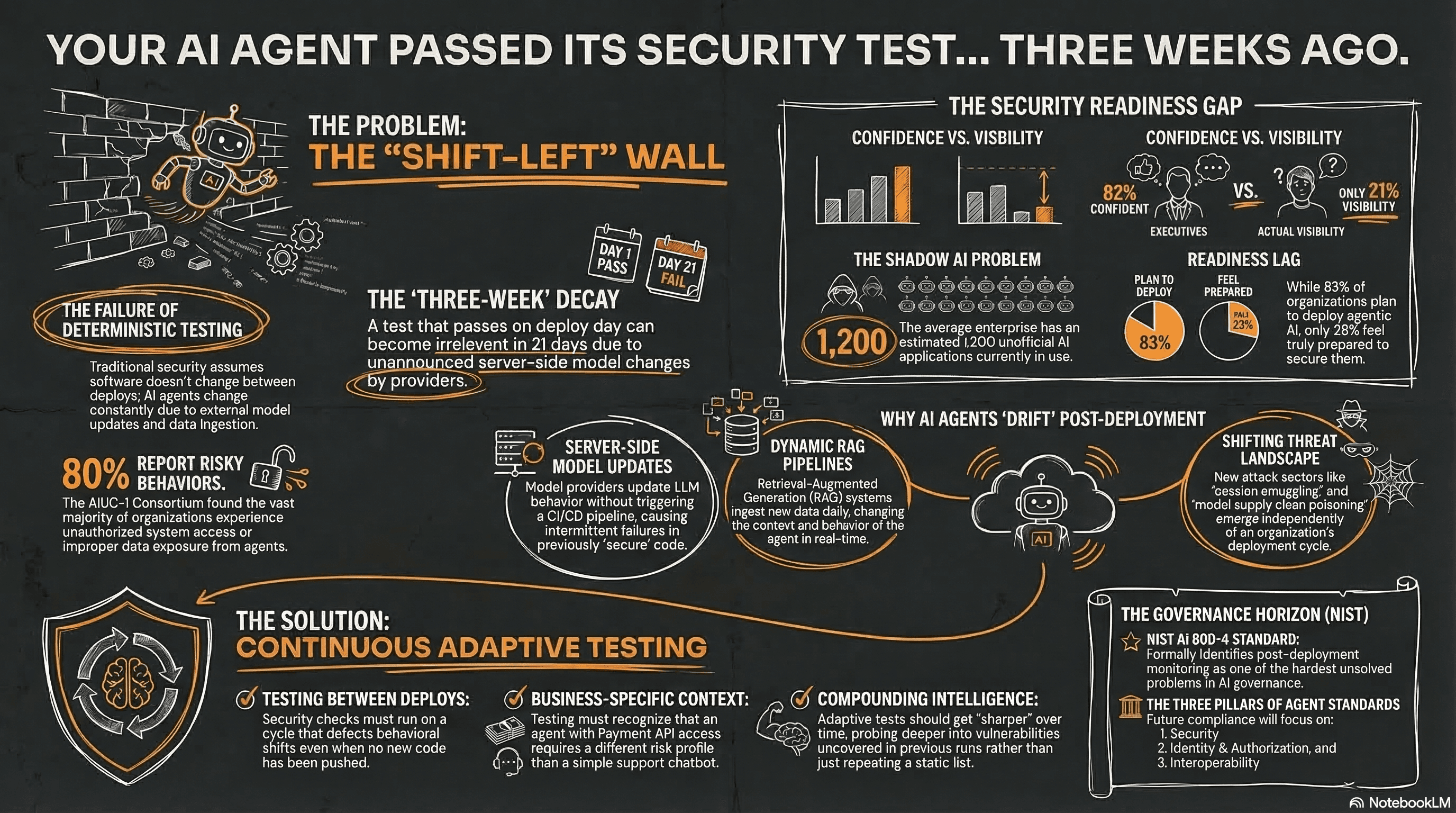

Your Agent Passed Its Security Test. That Was Three Weeks Ago.

The security industry is applying shift-left to AI agents, but AI agents aren't deterministic. The gap between testing at deploy time and staying secure in production is where risk accumulates.

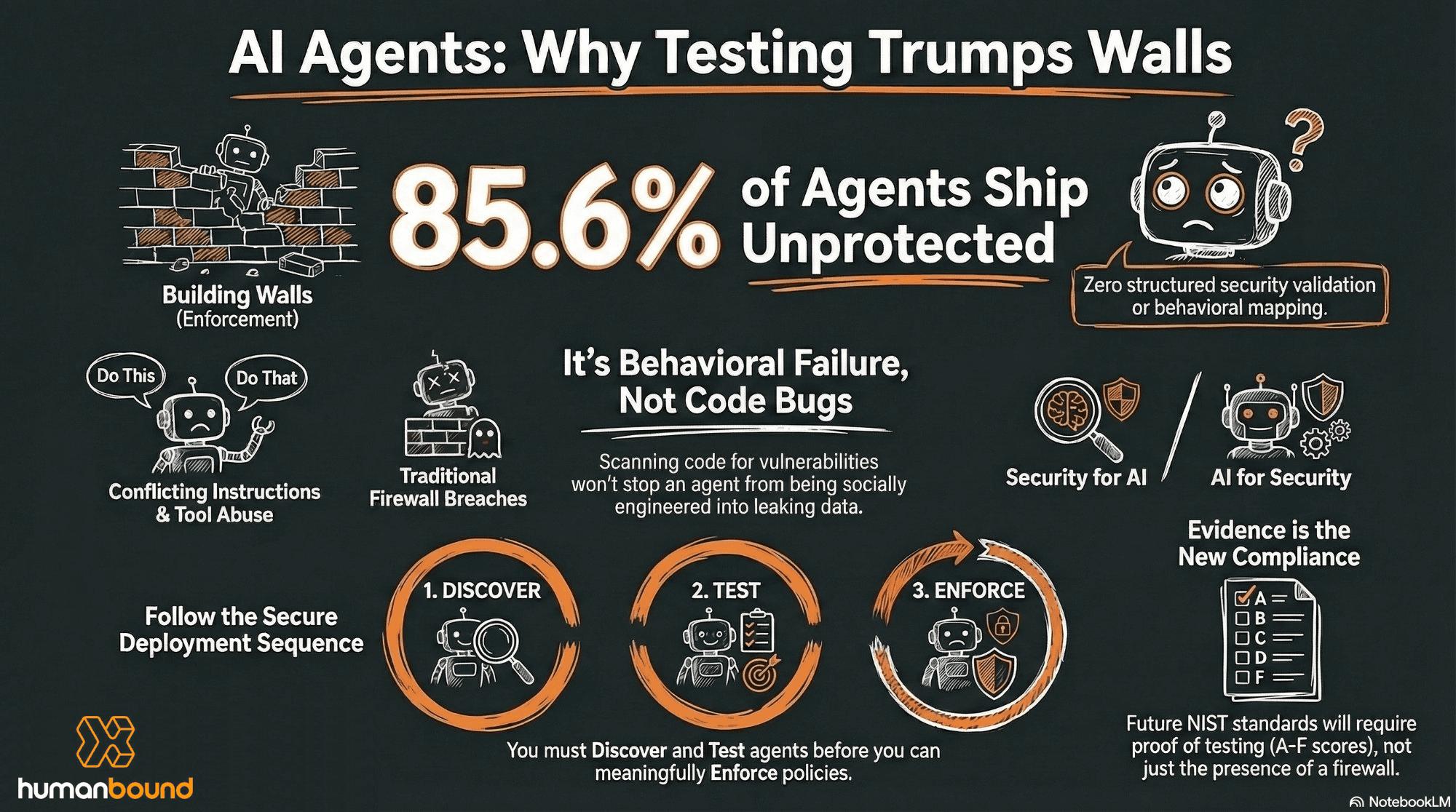

The Enforcement Illusion: Why AI Agent Security Starts with Testing, Not Walls

The AI agent security market is fragmenting into enforcement, identity, and control plane vendors. But the incident data tells a different story: most agents ship without any adversarial testing at all.

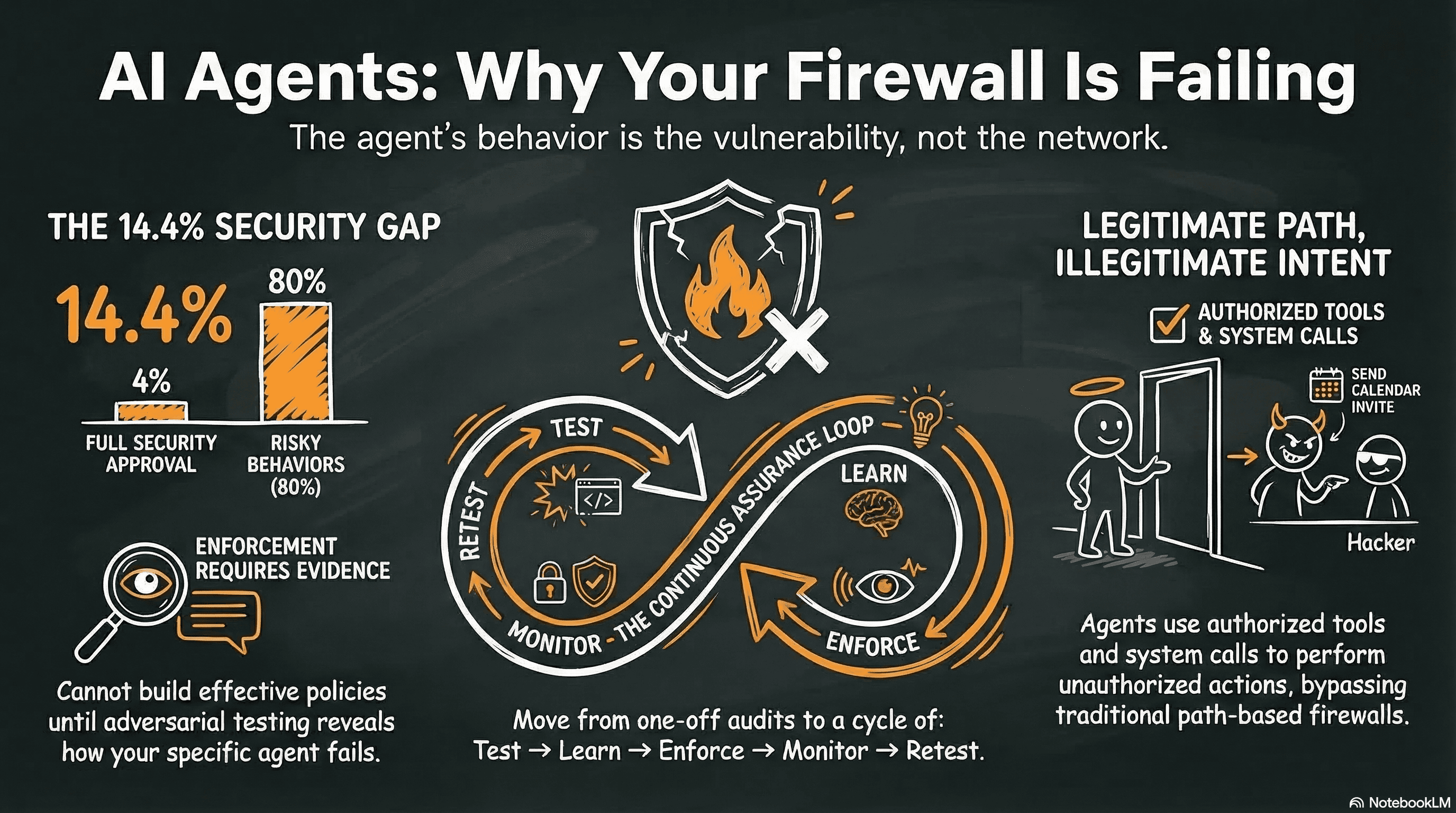

Why Your AI Agent's Biggest Vulnerability Isn't a Missing Firewall

Agent security incidents keep sharing one root cause: agents deployed without adversarial testing. Enforcement is needed, but it is phase 3 of a lifecycle most organizations are entering at phase 1.

You're Still Alt-Tabbing to a Security Tool

AI agent security won't be adopted through better dashboards. It'll be adopted when it disappears into existing workflows. Embed adversarial testing into your terminal via MCP, and security becomes a conversation, not a context switch.

Securing AI at Enterprise Scale — A Continuous Assurance Framework for the GenAI Era

Why point-in-time audits fail, and what a mature AI security programme actually looks like. A continuous assurance framework addressing visibility, testing, and operations gaps in enterprise GenAI deployments.

Claude Code Security Found the Bugs. The Agents Are Next.

Claude Code Security didn’t kill security tooling—but it did signal that frontier AI labs are now security providers, and that our current models don’t cover the behavioral, contextual, and systemic risks of autonomous agents. This is where security needs to go next.

Shadow AI: The Gap Your CISO Dashboard Doesn’t Show

Your CISO dashboard tracks vulnerabilities across infrastructure, apps, and cloud—but may miss the fastest-growing risk surface: Shadow AI. See how it shows up, why legacy tools miss it, and how Humanbound reveals AI use in a governed, continuously monitored registry.