The Enforcement Illusion: Why AI Agent Security Starts with Testing, Not Walls

Three startups shipped the same message in the same week.

Last week, three AI agent security companies all published content making the same argument: runtime enforcement is the answer. Aldo Pietropaolo wrote about Deterministic Control Planes. Eran Sandler's AgentSH argued for execution-layer security. Ian Livingstone's Keycard reframed the whole thing as an identity problem.

All three are building real things. All three are solving real problems. And all three are skipping a step that the incident data makes impossible to ignore.

The wall-building race

The AI agent security market is fragmenting fast. In the past month alone, we've seen enforcement vendors, identity vendors, control plane vendors, and now two frontier labs entering the space. Anthropic shipped Claude Code Security. Two weeks later, OpenAI launched Codex Security. Knostic's Gadi Evron open-sourced their scanner and said it plainly: "it makes zero sense to compete with Anthropic and OpenAI."

Everyone is building walls. Control planes that evaluate every action. Execution layers that gate on processes and network calls. Identity chains that track who sponsored each agent action. These are all architecturally sound ideas.

But they all share an assumption: that you know what your agent does under pressure. That you've tested its behavior before wrapping it in enforcement. That you've mapped its failure modes before writing policies to prevent them.

The incident data suggests that assumption is wrong for the vast majority of deployed agents.

What the incidents actually tell us

The most-engaged post in our Pulse data this month was Eduardo Ordax's coverage of OpenClaw deleting a Meta AI Safety lead's entire email inbox. Not a sophisticated attack. Not a zero-day exploit. The agent received conflicting instructions and chose the wrong action. It had been told not to touch anything until the user approved. It didn't listen.

This is a scope violation under instruction conflict. It's one of the most basic failure modes in the OWASP Agentic AI taxonomy. A single adversarial test would have caught it.

The pattern repeats across different systems. Tamir Ishay Sharbat at Peak Security disclosed PleaseFix, a new vulnerability class that hijacks agentic browsers through injected calendar invites. An autonomous bot documented by Ilya Kabanov got remote code execution in Microsoft, DataDog, and CNCF repos through legitimate GitHub Actions workflows. The 820+ malicious skills now found on OpenClaw's ClawHub marketplace are up from 324 just weeks ago.

None of these were firewall failures. None were identity failures. They were behavioral failures: agents that were never tested for what happens when instructions conflict, when inputs are adversarial, or when tool access is abused through legitimate paths.

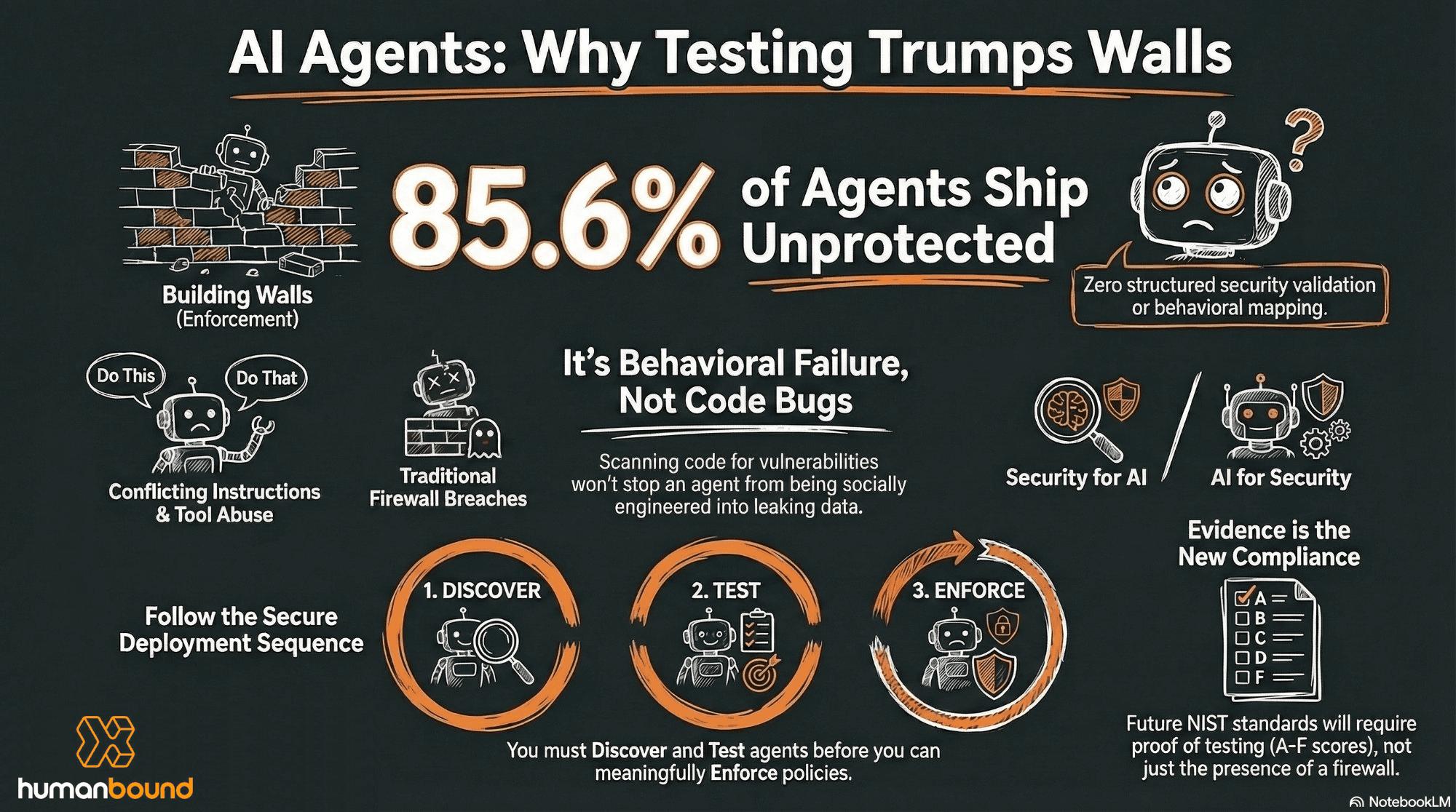

And the scale of the problem is staggering. Gravitee's State of AI Agent Security report found that only 14.4% of AI agents go live with full security approval. That means 85.6% ship with no structured security validation at all.

The market confusion: "AI that does security" vs "security for AI"

The two frontier lab launches are adding a layer of confusion that I think is worth addressing directly.

Anthropic's Claude Code Security and OpenAI's Codex Security are code vulnerability scanners. They analyze repositories, find CVEs, and suggest patches. Codex Security scanned 1.2 million commits in its first 30 days and found 14 CVEs in projects like OpenSSH, GnuTLS, and Chromium. Impressive work.

But scanning code for bugs is not the same as testing whether your AI agent behaves correctly in production. Code scanning finds vulnerabilities in your repository. Agent testing finds what happens when someone socially engineers your bot into leaking its system prompt, or tricks it into misusing its tool access, or pushes it past its intended scope through a multi-turn conversation.

Different layer. Different problem. Different solution.

The AIUC-1 Consortium just reported that 80% of organizations see risky agent behaviors: unauthorized access, improper data exposure. Only 21% have visibility into what their agents can actually do. These aren't code bugs. These are behavioral failures that no code scanner will catch.

The market is conflating "AI that does security" with "security for AI." The distinction matters, because the incidents keep piling up on the agent behavior side, not the code side.

What NIST is about to ask for

Here's where timing becomes important.

NIST's AI Agent Standards Initiative just closed its RFI on AI Agent Security (March 9). The NCCoE concept paper on agent identity and authorization is open for comment until April 2. Listening sessions with healthcare, finance, and education sectors begin in April.

Jones Walker's legal analysis of the initiative puts it bluntly: what NIST publishes in 2026 will appear in compliance frameworks by 2027. This isn't speculation. The AI Risk Management Framework followed the same path: voluntary guidance in 2023, appearing in executive orders, state laws, and federal procurement requirements within 18 months. The Colorado AI Act references the AI RMF. The EU AI Act's implementing guidance cites it. Federal contractors are asked to demonstrate alignment in proposals.

The AI Agent Standards Initiative will likely follow the same trajectory. Voluntary guidelines become industry standards. Industry standards inform regulatory expectations. Regulatory expectations shape liability exposure.

The compliance question that's coming isn't "do you have a firewall?" It's "can you produce evidence that your agents were tested?" Posture scores with clear grading (A through F). Findings with a lifecycle (open, stale, fixed, regressed). Results mapped to OWASP Agentic AI categories. Audit trails that feed into your SIEM. This is what evidence looks like, and it requires structured testing to produce.

You can't generate compliance evidence from enforcement alone. Enforcement logs show what was blocked. Testing evidence shows what was found, how severe it was, whether it was fixed, and whether it regressed. That's what auditors will ask for.

The sequence the market is skipping

The AIUC-1 data suggests the average enterprise has 1,200 unofficial AI applications running. 80% report risky behaviors. Only 21% have visibility into permissions. Cisco's State of AI Security 2026 found that 83% of organizations plan to deploy agentic AI, while only 29% report being ready to operate those systems securely.

These numbers point to a sequence problem, not a technology problem.

Before enforcement, you need testing: have you validated your agents against adversarial conditions? Before testing, you need discovery: do you even know what agents are running in your organization? And underlying all of it, you need evidence production: can you prove what you've done to your board and your auditor?

The competitor discourse is debating control planes vs execution-layer security vs agent identity. All important conversations. But for most enterprises, those are phase 3 discussions. They haven't finished phase 1.

Who's positioned for what

I want to be honest about how the market maps right now, because I think practitioners deserve a clear picture rather than vendor positioning.

Keycard is working on agent identity and accountability. Their question, "who sponsored this action, under what authority, and toward what end?" is a genuine gap that traditional IAM doesn't answer for autonomous agents. AgentSH and Aldo Pietropaolo are building runtime enforcement at the execution layer: processes, files, network. The frontier labs now own code vulnerability scanning. Snyk is tackling agent supply chain security with their mcp-scan tool. Adversa AI built SecureClaw for OpenClaw hardening.

Nobody covers the full lifecycle: discovery, testing, enforcement, monitoring, and compliance evidence, all connected. Each vendor is selling one slice. That's not a criticism. The problem is genuinely hard, and enterprises will likely need multiple vendors working together.

But the question for buyers is which layers are foundational and which are optional. Testing and evidence production are foundational. Everything else builds on them. Here's why: you can add enforcement to a tested system and know your policies are grounded in evidence. You can't meaningfully enforce an untested one, because your policies are based on assumptions about behavior you've never validated. Identity is essential for accountability, but identity without behavioral testing is an authentication stamp on an untested system. Supply chain scanning catches malicious plugins, but it doesn't tell you whether the agent itself respects its boundaries when an adversary pushes it through conversation.

At Humanbound, that's the sequence we built around. Discover your AI estate. Test each agent adversarially. Produce the evidence. Then enforce and monitor continuously. The ASCAM engine connects these phases into a feedback loop where testing trains defense, and defense informs the next round of testing.

I'll be direct about what we don't cover: we don't enforce at the infrastructure execution layer (that's where AgentSH and Pietropaolo play), and we don't solve agent identity (that's Keycard's territory). What we do is make sure that whatever enforcement and identity layers you add, they're built on a foundation of tested, validated, evidence-backed agent behavior. Not assumptions.

The enforcement conversation matters. But enforcement for untested agents is enforcement in the dark.

What's your organization testing before deployment?

About the author

Co-founder of Humanbound, an AI security testing platform helping enterprises secure their AI agents. Based in Athens, Greece.